Metal Gear Standard: How OpenSFF can help dedicated server providers

Introduction

The data center market is booming, and providers are barely keeping up. By the end of 2025, the overall vacancy rate in US primary markets had fallen to a record low of 1.4%, with some markets such as Northern Virginia sitting below 1%. Capacity simply cannot be built fast enough to keep pace with demand. Much of that demand is driven by AI inference, but dedicated server providers face persistent challenges regardless of the workload. In this article, we will explore the current state of bare metal hosting and how our open modular standard can help.

Modern server provider hardware

The majority of dedicated server providers use 1U and 2U rackmount systems. Providers prefer those standard form factors because they are easy to procure from multiple vendors, straightforward to automate and build processes around, and relatively simple to replace.

Hetzner operates hundreds of thousands of servers across Germany, Finland, the US, and Singapore. Their lineup comprises custom-built desktop PC-style servers and Dell PowerEdge prebuilts, with processors ranging from consumer Intel Core CPUs to AMD EPYC processors.

Leaseweb, on the other hand, appears to focus on prebuilt systems from major vendors such as Dell, HP, and Supermicro, while still standardizing on 1U and 2U servers.

OVHcloud, which has over 450,000 servers across four continents, is a notable outlier. The provider has been assembling its own servers almost since its inception, and that vertical integration has led to significant density and efficiency gains over decades of iteration. Their designs pack 48 servers into just four custom racks that occupy a little over 32 sq. ft. and use up to 160kW of power. This gives OVHcloud a power density of around 5,000W per sq. ft., a figure that the company claims is better than 90% of its competitors. In 2024, the company launched its Bare Metal Pod, an autonomous unit that occupies only 24U.

While blade servers have offered density and shared infrastructure for decades, they are a poor fit for the business model of dedicated server providers. Blade chassis are lock-in traps: switching vendors would mean replacing everything, including blades, drives, rails, and management platforms. The power demands of server CPUs have also pushed against the thermal limits of many blade designs. Finally, the architecture’s key advantage, its shared infrastructure, is itself a dealbreaker for dedicated server providers. A power or cooling fault can take down every node in the chassis, which becomes an even bigger disaster when those nodes are rented out to different customers.

Persistent provider pain points

Regardless of how sophisticated their infrastructure is or what workloads they host, dedicated server providers have always struggled with provisioning and lifecycle issues.

Hetzner, Leaseweb, OVHcloud, and other major dedicated server providers have considerably raised the bar on provisioning through decades of experience. They offer configurations that can be ready within minutes to an hour of ordering. However, that speed depends on having standardized hardware. As hardware generations, vendors, and firmware baselines accumulate, they inevitably complicate operations.

Forecasting server lifecycle is a much harder task. The bare metal automation experts at RackN summarized it best in one of their blog posts: “hardware has identity and history.” Firmware updates may not always be applied consistently. BIOS settings vary and drift over time. Vendor-specific tooling also behaves differently across generations.

Further, as cloud provider Scaleway points out, the consequences of wear and tear are not linear. The interaction between the processor, memory, storage, and other components makes it difficult to document, assess, and predict hardware health. OVHcloud proves that standardization makes server maintenance and optimization easier. An open and modular standard could help all providers enjoy those same benefits.

What OpenSFF enables

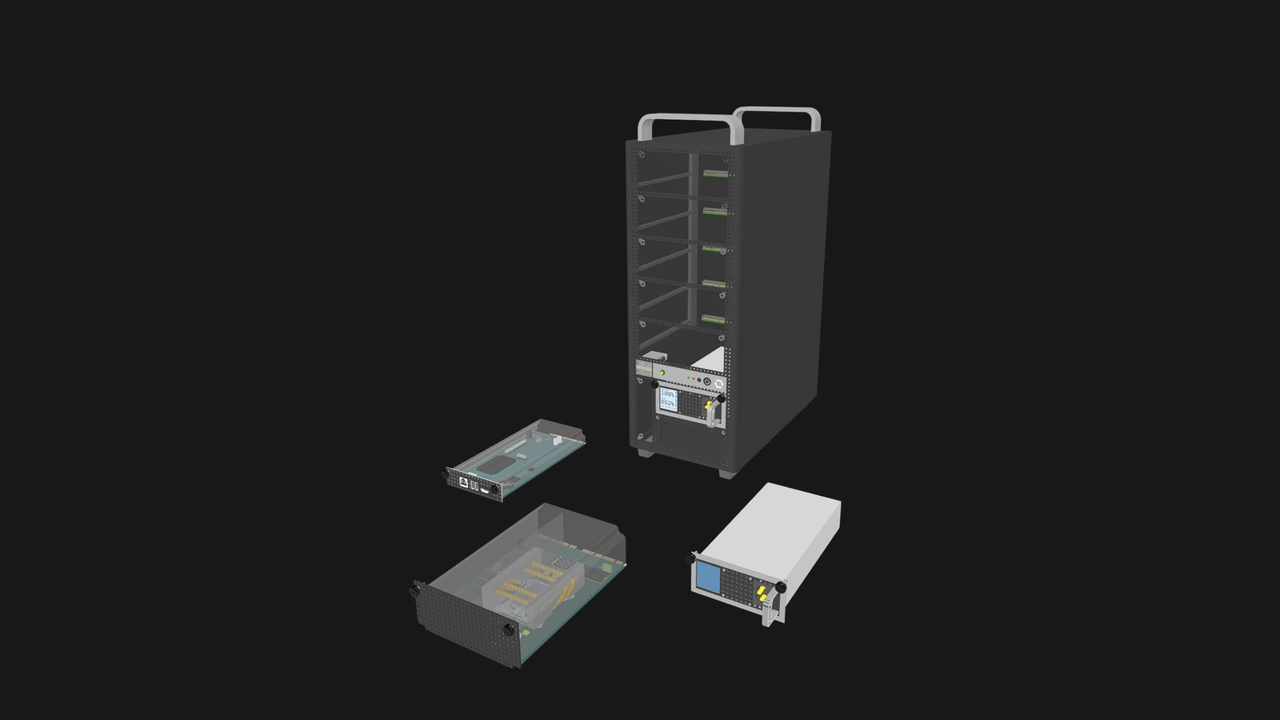

Our specifications allow vendors to offer solutions that combine the density and efficiency of traditional blade architecture with the vendor-neutral form factor of desktop systems.

Enterprise Enclosures provide centralized power delivery, dedicated internal networks for general-purpose traffic and out-of-band management, and a dedicated Management Module slot. Yet the three main components (the Compute Node, the Enclosure, and the Management Module) are independently replaceable and vendor-neutral. Any compatible Compute Node or Management Module will work in any compatible Enclosure.

Enterprise-class systems have several features that protect against chassis-wide points of failure. Enterprise Enclosures must support redundant PSU configurations. If one or more Compute Nodes are not receiving adequate cooling, the Management Module can override the Enclosure settings and push all fans to their maximum setting. The Enclosure microSD Card streamlines Management Module replacement and Enclosure migration. Our default Management Module software accounts for multi-tenant scenarios. Besides separating the platform administrator from node administrators, we believe it is possible to assign multiple Management Modules to blocks of Compute Nodes in specialized Enclosures.
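The cooling override described above can be pictured with a small Python sketch. Everything in it is an assumption made for illustration: the function name `fan_duty`, the use of node temperature as the signal for inadequate cooling, and the 85°C limit are hypothetical and are not taken from the OpenSFF specifications.

```python
from typing import Mapping

MAX_DUTY = 100  # fan duty cycle in percent


def fan_duty(
    node_temps_c: Mapping[str, float],
    enclosure_duty: int,
    limit_c: float = 85.0,  # hypothetical threshold, not from the specification
) -> int:
    """Return the fan duty cycle the Enclosure should run at.

    If any Compute Node reports a temperature at or above the limit,
    the Enclosure's own setting is overridden and all fans go to maximum.
    Otherwise the Enclosure's setting is kept.
    """
    if any(temp >= limit_c for temp in node_temps_c.values()):
        return MAX_DUTY
    return enclosure_duty


# One hot node is enough to trigger the override for the whole Enclosure.
print(fan_duty({"node-01": 62.0, "node-02": 91.5}, enclosure_duty=40))
print(fan_duty({"node-01": 62.0, "node-02": 70.0}, enclosure_duty=40))
```

The point of the sketch is the policy shape: a single under-cooled node escalates cooling for every node, which trades fan noise and power for protection against a chassis-wide thermal event.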

Speaking of which, we came up with a design that explores how our standard might be implemented at the scale that dedicated server providers care about.

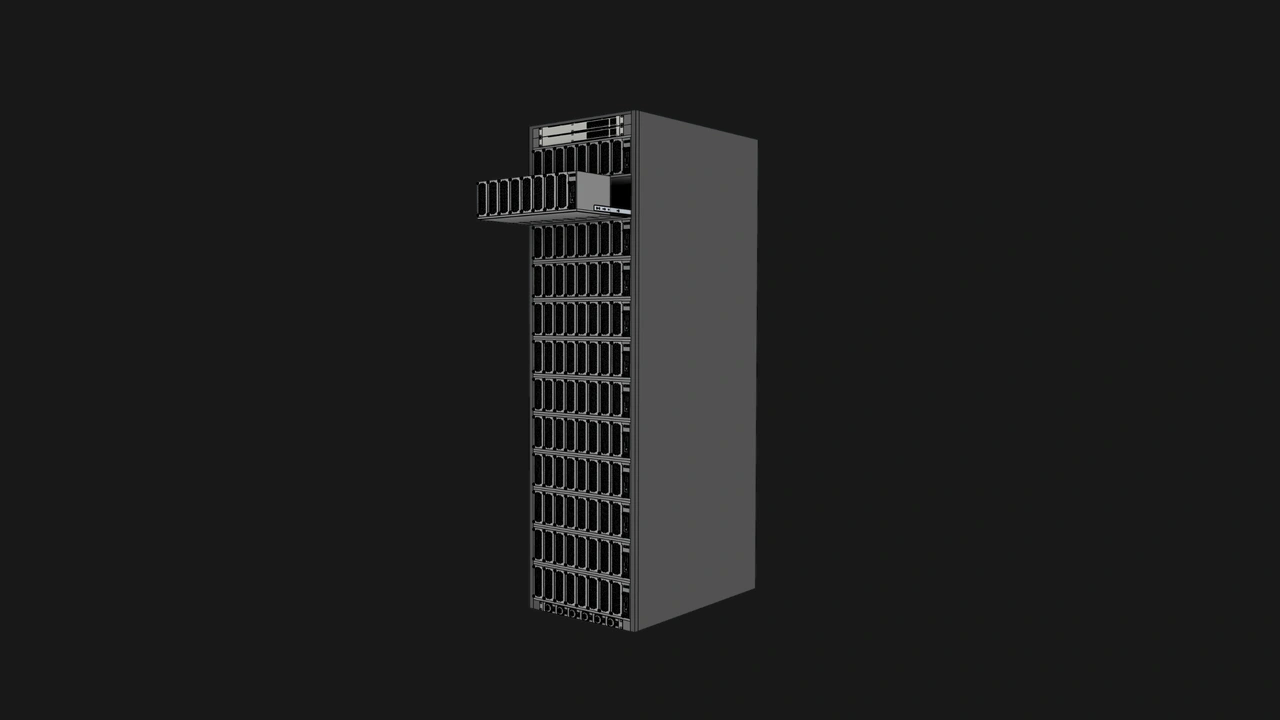

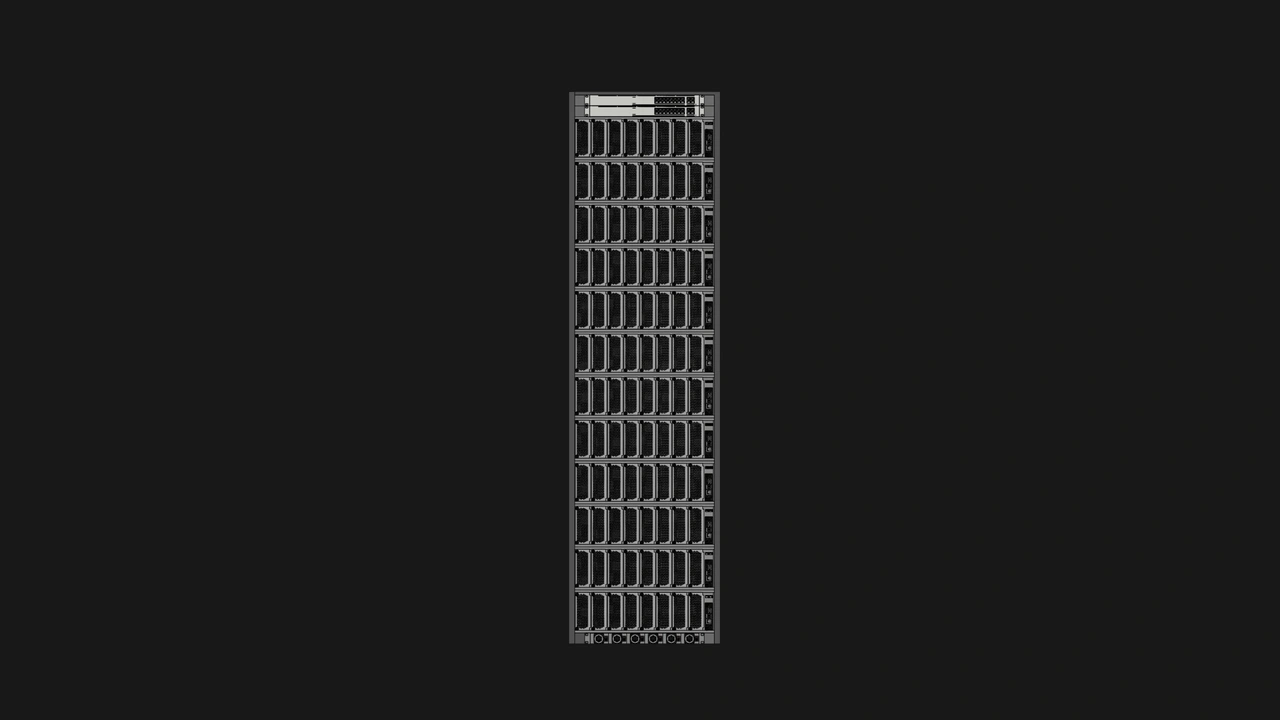

192 nodes in 50U

This concept involves an Enterprise Enclosure in the form of an open rack with 50U of space. Each row would be 24” wide to accommodate rails and internal cable routing, but the Enclosure itself would still be within the footprint of a typical 50U cabinet. Compute Nodes would be mounted vertically on both interior faces of the Enclosure: eight Compute Nodes side by side as one block, 12 blocks per face. That gives us 96 Compute Nodes per face for a total of 192 nodes.

For networking, each face would have two 16-port 10GbE switches. To meet our Ethernet requirements, we can route 10GbE uplinks from each block of Compute Nodes to these switches. Each block would have two uplink ports, one connected to each of the two switches. With 12 blocks per face, that uses 12 ports on each switch and leaves four free. Beyond the redundancy of dual uplinks, dedicated server providers could use one of those free ports for an inter-switch link between the two switches. This way, if an uplink on one switch fails, traffic can be routed through the other switch.
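The node and port counts in this concept follow from a few multiplications. The sketch below works through them in Python; the `RackLayout` class and its field names are illustrative and not part of the OpenSFF specifications, while the numbers come from the layout described above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RackLayout:
    """Figures from the 50U concept; the class itself is only illustrative."""

    faces: int = 2
    blocks_per_face: int = 12
    nodes_per_block: int = 8
    switches_per_face: int = 2
    ports_per_switch: int = 16

    @property
    def nodes_per_face(self) -> int:
        return self.blocks_per_face * self.nodes_per_block

    @property
    def total_nodes(self) -> int:
        return self.faces * self.nodes_per_face

    @property
    def uplinks_per_switch(self) -> int:
        # Each block sends one uplink to each switch on its face.
        return self.blocks_per_face

    @property
    def free_ports_per_switch(self) -> int:
        return self.ports_per_switch - self.uplinks_per_switch


layout = RackLayout()
print(layout.nodes_per_face)         # 96
print(layout.total_nodes)            # 192
print(layout.free_ports_per_switch)  # 4
```

Changing `blocks_per_face` or `ports_per_switch` shows quickly whether a variant of the design still leaves a spare port for an inter-switch link.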

For remote management and IP-KVM, vendors can go with a single full-featured Management Module for the entire system, or design the Enclosure to support multiple Management Modules, perhaps one per block as shown in the renders in this section.

At our 120W maximum power target per Compute Node, 192 nodes would draw up to approximately 23kW at full load (192 × 120W = 23.04kW). Even after we add 300W for the switches, the system’s total power budget of roughly 23.3kW would still be well within range of what existing bare metal configurations already support.
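As a quick check of the power figures, here is a minimal Python sketch. It assumes the 300W switch allowance is a single total for all four switches, which is one reading of the text; the constant and function names are illustrative.

```python
NODE_MAX_W = 120          # per-node maximum power target
NODE_COUNT = 192          # Compute Nodes in the 50U concept
SWITCH_ALLOWANCE_W = 300  # assumed to cover all switches combined


def rack_power_w(
    nodes: int = NODE_COUNT,
    node_w: int = NODE_MAX_W,
    switch_w: int = SWITCH_ALLOWANCE_W,
) -> int:
    """Worst-case power draw in watts: every node at its maximum, plus switches."""
    return nodes * node_w + switch_w


compute_w = NODE_COUNT * NODE_MAX_W
total_w = rack_power_w()
print(f"compute: {compute_w / 1000:.2f} kW")  # compute: 23.04 kW
print(f"total:   {total_w / 1000:.2f} kW")    # total:   23.34 kW
```

This is a worst-case ceiling, since it assumes every node draws its full 120W at the same time. It does not account for fan power or PSU conversion losses.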

For cooling, our design uses a bottom-up airflow path. Cool air is drawn into the Enclosure’s central channel by intake fans at the base, then directed through each Compute Node’s thermal solution by dedicated fans.

Build with OpenSFF

Dedicated server providers have complicated and longstanding challenges regardless of their customers’ workload. Our specifications allow vendors to provide standardized solutions for all providers, or offer specialized Enclosures while still preserving the interoperability of Compute Nodes and Management Modules.

With OpenSFF, providers can enjoy density and efficiency without committing their strategy to a single vendor’s products, capacity, or roadmap. We are working hard to define a stable foundation that vendors can scale and configure to meet a wide range of user demands.

We invite you to read our specifications, and we would be grateful if you spread the word about OpenSFF. For technical clarifications, collaborations, and other inquiries, reach out to our development team at [email protected].
