Optional Opportunities: How OpenSFF Gives Vendors Freedom to Innovate and Stand Out

Introduction

Our specifications establish clear requirements for interoperability. For instance, OpenSFF Compute Nodes have fixed dimensions: 215mm x 150mm x 60mm. Management Modules have the same length but are only 110mm wide and 30mm tall. Both components are secured by M4 captive thumbscrews for tool-less retention.

We adopted the SFF-TA-1002 connector standard to carry power, high-speed signals, and management communications on a single interface. We also define the Enterprise Enclosure, which must provide internal networking, out-of-band management infrastructure, and support for redundant power.

However, the aspects that our standard does not dictate are equally important. Connectivity options, Core Enclosures, component selection and layout, ruggedness, and thermal design are all left to vendors. These freedoms create opportunities for differentiation without sacrificing compatibility. A compact desktop Enclosure and a weatherproof industrial Enclosure can host the same Compute Node while each is optimized for its intended use case.

Let us take a closer look at these aspects and how vendors can take advantage of them to create a diverse OpenSFF ecosystem.

Connectivity

The Compute Node specification defines a minimum set of I/O: two Ethernet ports, a DisplayPort output, and USB connectivity through the Core Connector. On Compute Nodes equipped with it, the Enterprise Connector must provide at least two more Ethernet ports and an additional USB-C port. Vendors are free to go beyond this baseline by adding ports or upgrading the required ones.
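To make the idea of a floor concrete, here is a minimal Python sketch that checks a node's port inventory against the minimums summarized above. The port names, the function names, and the assumption that "USB connectivity" means at least one USB interface are ours for illustration; they are not taken from the specification text. The sketch also treats every interface as a simple count, regardless of whether it is routed through a connector or the I/O shield.

```python
# Hypothetical sketch: port names and the USB minimum of 1 are assumptions,
# not values copied from the OpenSFF specification.
CORE_MINIMUM = {"ethernet": 2, "displayport": 1, "usb": 1}
ENTERPRISE_EXTRA = {"ethernet": 2, "usb_c": 1}


def required_ports(has_enterprise_connector: bool) -> dict[str, int]:
    """Return the minimum port counts for a node, per the summary above."""
    required = dict(CORE_MINIMUM)
    if has_enterprise_connector:
        for port, count in ENTERPRISE_EXTRA.items():
            required[port] = required.get(port, 0) + count
    return required


def missing_ports(ports: dict[str, int],
                  has_enterprise_connector: bool = False) -> dict[str, int]:
    """Return the shortfall per port type; an empty dict means the floor is met."""
    required = required_ports(has_enterprise_connector)
    return {
        port: need - ports.get(port, 0)
        for port, need in required.items()
        if ports.get(port, 0) < need
    }


# A signage-oriented node with a second DisplayPort still meets the Core floor.
signage_node = {"ethernet": 2, "displayport": 2, "usb": 2}
print(missing_ports(signage_node))  # {}
print(missing_ports(signage_node, has_enterprise_connector=True))
# {'ethernet': 2, 'usb_c': 1}
```

Extra ports never cause a failure in this model. Only a shortfall does, which mirrors how a minimum requirement leaves room for vendor additions.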

The I/O shield offers plenty of real estate for additional ports. A vendor targeting home or office users could add antenna cutouts for Wi-Fi and Bluetooth. Meanwhile, a Compute Node meant for digital signage or kiosks could be given a secondary DisplayPort output for display mirroring.

Additional USB ports on the I/O shield can also enable convenient local servicing without the need for network access. Vendors may even add an easily removable M.2 tray or hot-swappable EDSFF drives for fast and tool-less drive replacement.

Future revisions of our specifications may also integrate additional connectivity options, such as a single PCIe 4.0 lane (x1). This expansion would make room for more specialized devices. For instance, a basic NAS device could be designed around a Core Enclosure with several SATA or SAS drive bays that the Compute Node accesses over the PCIe lane.

Core Enclosures

As we discussed in previous articles, Core Enclosures are the most flexible component of OpenSFF. Unlike Enterprise Enclosures, which have strict node management and power delivery requirements, Core Enclosures can be practically anything that can properly host, power, and cool one or more Compute Nodes.

These minimal requirements allow vendors to design a variety of purpose-built systems, from workstations to edge appliances. For instance, a Core Enclosure can be configured to be a self-contained test bed for evaluating Compute Nodes or Management Modules, perhaps with a built-in display to show benchmark results and status information. Test data and logs could be made accessible through the management interface.

Core Enclosures can also selectively adopt Enterprise-class features. A Core Enclosure meant for small business servers could support the Management Module and have a Private Enclosure Network (an out-of-band management network for remote access), but omit redundant power delivery. Perhaps a multi-node Core Enclosure could have only one or two of its slots equipped with an Enterprise Connector, providing users with additional connectivity while maintaining manageable dimensions, complexity, and price.
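Selective adoption can be pictured as a simple set comparison. The Python sketch below is only an illustration: the feature labels and the example enclosure are hypothetical, and the three Enterprise capabilities are the ones named earlier in this article (internal networking, out-of-band management, and redundant power), not an exhaustive list of Enterprise Enclosure requirements.

```python
# Hypothetical labels for the Enterprise Enclosure capabilities named in this
# article. The real specification may define more requirements than these.
ENTERPRISE_CAPABILITIES = frozenset(
    {"internal_networking", "out_of_band_management", "redundant_power"}
)


def adopted(features: set[str]) -> list[str]:
    """Enterprise-class capabilities that an enclosure chooses to include."""
    return sorted(ENTERPRISE_CAPABILITIES & features)


def omitted(features: set[str]) -> list[str]:
    """Enterprise-class capabilities that an enclosure leaves out."""
    return sorted(ENTERPRISE_CAPABILITIES - features)


# Illustrative small business Core Enclosure: managed, but single power supply.
small_business = {"internal_networking", "out_of_band_management", "front_display"}
print(adopted(small_business))  # ['internal_networking', 'out_of_band_management']
print(omitted(small_business))  # ['redundant_power']
```

Features outside the Enterprise list, such as the made-up `front_display` entry, are simply ignored by both functions, since they are vendor extras rather than adopted requirements.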

Components

Vendors are free to choose the internal components of their implementations and arrange them as they see fit. For example, our Compute Node and Management Module specifications do not mandate storage configurations. Vendors can provide on-board eMMC for thin clients or multiple M.2 slots for workstations. Management Modules can have an SD card slot or come with an NVMe drive.

Processors can also provide opportunities for optimization and differentiation. Vendors can design Compute Nodes around low-power CPUs for always-on edge devices or thin clients. High-performance APUs can be chosen for premium variants. Vendors can go the mini PC route and offer soldered processors to maximize space and cooling, or opt for a CPU socket for more serviceable Compute Nodes. Compute Nodes can even house carrier boards to cater to highly specialized industries.

When it comes to Enclosures, vendors targeting medium-scale workloads can integrate managed switches, or ones that offer speeds beyond the 2.5GbE minimum. An Enclosure designed for remote installations could incorporate Power over Ethernet (PoE) to power connected devices.

Beyond the required power and reset buttons and status indicator, vendors can also offer convenient or premium physical interfaces on Enclosures. They can add LEDs for diagnostics or even a display for viewing detailed node or network information. Vendors are also free to play with the Enclosure’s aesthetics and materials to marry form with function.

Mechanical and electrical robustness

Our standard mandates reliability in typical indoor environments. Compute Nodes and Management Modules are specifically required to operate under the conditions stated in ISO 14644-1 Class 9. But there is nothing preventing vendors from going beyond this baseline.

Durable components and systems are well-suited for industrial and edge deployments. Vendors can create Compute Nodes and Enclosures that meet high IP ratings for dust and moisture resistance. They may also pre-certify their implementations for specific industrial environments, or offer enhanced shock and vibration tolerance beyond the industry standards that are mentioned in our specifications.

Vendors can also use protective coatings as differentiators. They can create corrosion-resistant Compute Nodes, or enhance the I/O shield to be even more resistant to dust. Vendors can also choose internal components that are rated for extended temperature ranges.

When properly implemented, these optional enhancements will not compromise our standard’s interoperability and compatibility. A ruggedized Compute Node still has the same dimensions, connector, and retention mechanism as a basic Compute Node. It is specialized without becoming completely custom or proprietary hardware that works only on one or a handful of systems.
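One way to think about this is to separate what an Enclosure slot depends on from everything else. The following Python sketch is a hypothetical model, not part of the specification: the class and field names are ours, and the default values are the Compute Node dimensions, connector standard, and retention method quoted earlier in this article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ComputeNode:
    """Hypothetical model; field names are illustrative, not from the spec."""
    name: str
    # Fixed by the standard (values as quoted in this article).
    dimensions_mm: tuple[int, int, int] = (215, 150, 60)
    connector: str = "SFF-TA-1002"
    retention: str = "M4 captive thumbscrews"
    # Left to the vendor.
    extras: frozenset = frozenset()


def interface(node: ComputeNode) -> tuple:
    """Return only the attributes an Enclosure slot depends on."""
    return (node.dimensions_mm, node.connector, node.retention)


def interchangeable(a: ComputeNode, b: ComputeNode) -> bool:
    """True when two nodes present the same dimensions, connector, and retention."""
    return interface(a) == interface(b)


basic = ComputeNode("basic")
rugged = ComputeNode(
    "rugged",
    extras=frozenset({"protective coating", "extended temperature parts"}),
)
print(interchangeable(basic, rugged))  # True
print(basic == rugged)                 # False
```

The two nodes are different products, yet they compare equal on every attribute the slot cares about. Vendor extras never enter the comparison.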

Thermal design and solutions

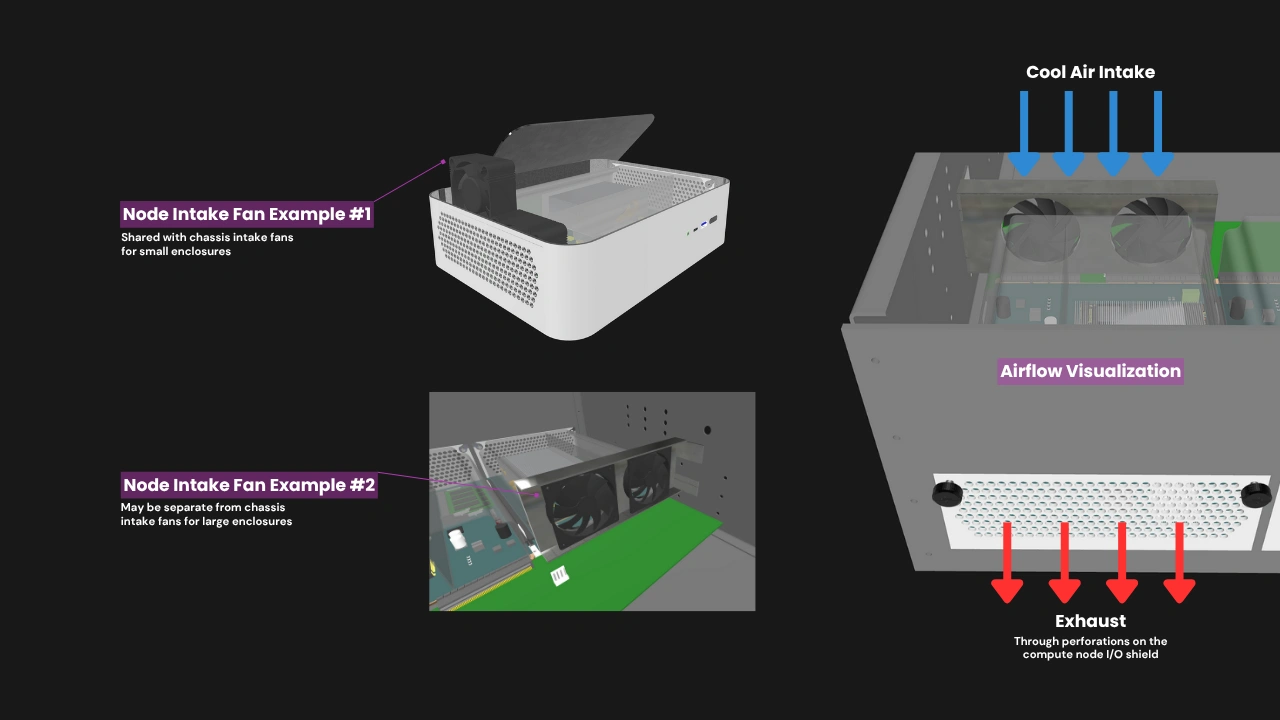

OpenSFF defines operating temperatures and airflow rates, but vendors are free to determine how they want to meet these requirements within the mechanical envelope. For instance, the Compute Node’s dimensions account for an air shroud that directs air through the node components and out through the perforations in the I/O shield. But a Compute Node with a high-performance processor can also be supported by equally sophisticated cooling solutions, such as vapor chambers, heat pipes, or extended heatsink assemblies that fit within the hardware’s dimensions.

The thermal design of Enclosures also offers vendors plenty of room for innovation and creativity. Fan configuration and form factor, airflow routing, and overall cooling capacity can be optimized for the Enclosure’s intended audience or use case. A vendor specializing in desktop Enclosures might opt for large, slow fans for quiet operation, while a vendor focusing on dense multi-node Enclosures could go for numerous medium-sized high-speed fans. Niche solutions such as cross-flow fans can be used in compact systems.

Build with OpenSFF

Our standard defines the floor, not the ceiling. We establish clear requirements for mechanical dimensions, connectors, electrical interfaces, and thermal limits in the name of interoperability. Everything else is an opportunity. We aim to become a stable foundation for multi-node systems the same way that ATX has anchored a diverse PC ecosystem for decades.

This freedom enables vendors to segment their products depending on price, purpose, operating environment, or performance. But a budget-oriented Compute Node and a premium variant will still work in the same Enclosure. A compact desktop Enclosure and a ruggedized one can both host the same Compute Node. Our standard rewards excellence in execution and innovation without holding the market back with proprietary restrictions.

These opportunities also make OpenSFF future-proof. As new protocols are defined, as processor architectures evolve, and as use cases multiply and converge, vendors can adapt their implementations while preserving interoperability and access to a wide customer base.

We encourage you to read our specifications, and we would be grateful if you helped spread the word about OpenSFF. For technical clarifications, partnerships, and other inquiries, reach out to our development team at [email protected].
