
The Box is The Lock: Architectural Lock-in in Modular Computing

Introduction

For IT administrators, evaluating a server platform involves more than reading its spec sheet. Whether you are planning a new deployment or an upgrade, the platform your organization buys now will also shape your future options.

Blade servers and other modular platforms are deliberately engineered to tightly couple compute, housing, networking, and management. This strictly unified approach does have benefits, but it also constrains you on several levels. Deciding whether those tradeoffs are acceptable given your organization’s present and future needs is not easy, but it is worth the effort.

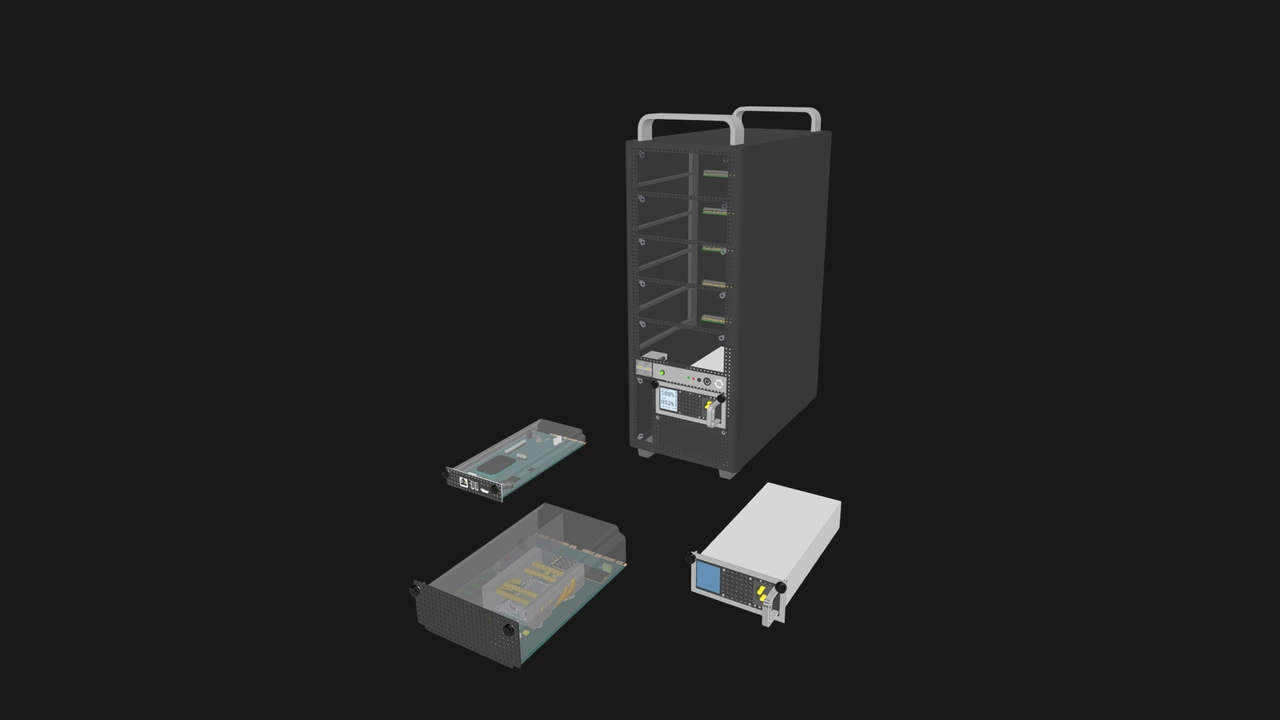

In this article, we look at three categories of vendor lock-in in major modular server platforms, covering both what vendors offer and what it means in practice. We then explain how OpenSFF breaks away from these constraints by offering vendors a set of design choices that start from different assumptions.

Physical and mechanical lock-in

Let us start with the most visible form of vendor lock-in. Blade dimensions, backplane or midplane connectors, mezzanine card form factors, and many other components are all vendor-defined and not interchangeable between platforms.

This total control and integration has produced genuinely practical innovations. The air-dielectric connectors in Dell’s M1000e enclosure have staggered columns to minimize crosstalk. Cisco’s UCS 5100 series chassis have a modular network architecture, so users can choose from a variety of modules to build a customized central switching setup. Supermicro turned the lack of standardized blade dimensions into a differentiator: customers can fit 10 of its SuperBlades in only 7U of rack space, which works out to a 40% density improvement in a 42U rack holding 60 SuperBlades.
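The SuperBlade density figure follows from simple rack arithmetic. The comparison baseline is not stated in the text, so the sketch below assumes it is a 42U rack filled with 1U servers; under that assumption the gain comes out to roughly 43%, close to the quoted 40%.

```latex
\begin{aligned}
\text{enclosures per rack} &= \frac{42\,\text{U}}{7\,\text{U}} = 6 \\
\text{SuperBlades per rack} &= 6 \times 10 = 60 \\
\text{1U servers per rack (assumed baseline)} &= 42 \\
\text{density gain} &= \frac{60}{42} - 1 \approx 0.43
\end{aligned}
```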

But you can access such innovations and many other unique features only if you are willing to bind your setup entirely to that vendor and its components.

The enclosure defines the physical envelope for every other compute decision you can make. HPE blades are not compatible with Dell’s enclosures; Cisco’s Fabric Interconnect and Fabric Extender modules work only with that specific line of server chassis. The same applies to add-on cards, drive caddies, power supplies, and even rail kits, including cases where vendors adopt a standardized interface.

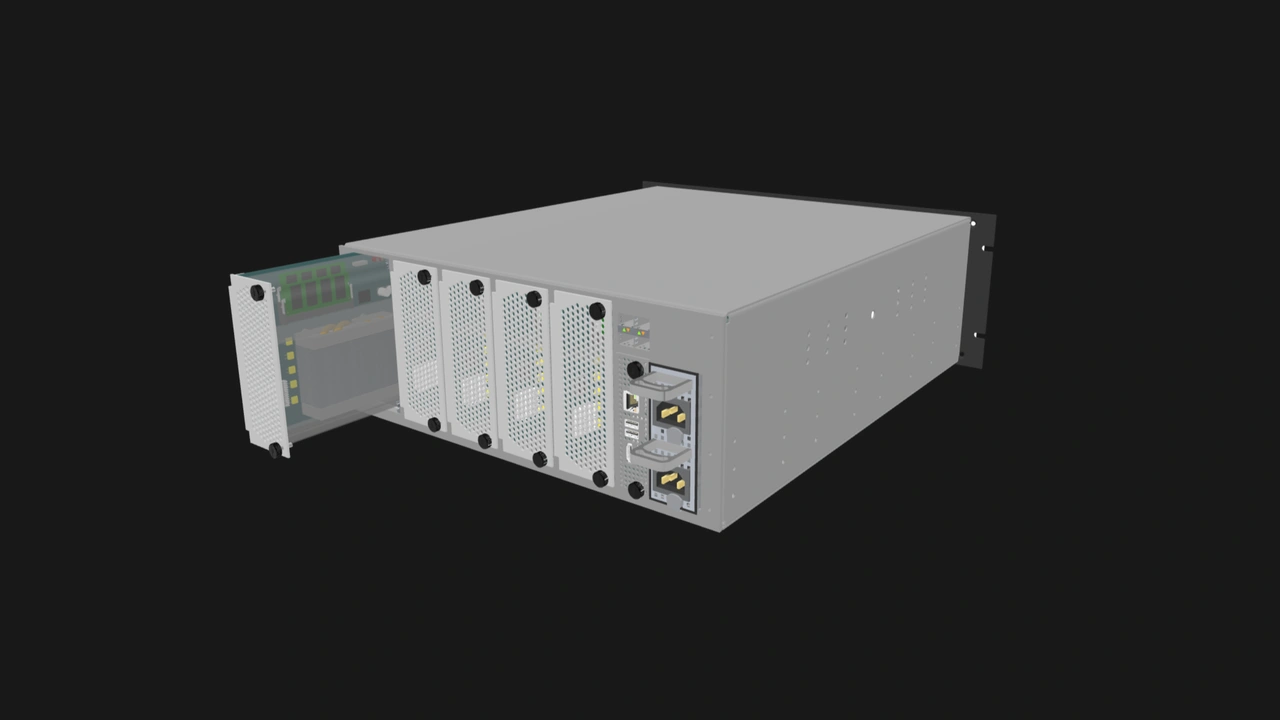

OpenSFF addresses physical and mechanical lock-in by pairing the SFF-TA-1002 4C+ Connector with strict dimensions and retention systems for the Compute Node and Management Module slots in Enclosures. A Compute Node from one vendor will fit and work in an Enclosure from a different vendor, provided both are compatible implementations of our specifications. In short, the physical layer cannot be used as a lock-in mechanism in OpenSFF systems.

Management plane lock-in

This form of lock-in puts down the deepest and strongest roots in your setup. Many customers have learned the hard way that a vendor’s marketing and promises describe only a small part of what day-to-day operations look like once you commit to its platform.

Practically all modular server vendors promise unified management, quick deployments, and an array of monitoring and scaling capabilities. Dell says its OpenManage Enterprise - Modular Edition (OME-M) software suite allows customers to manage “all servers, storage, and networking from a single IP address”, scaling up to 20 PowerEdge MX chassis from a single console. HPE markets OneView as a management solution that provides “seamless integration with a broad partner ecosystem.” Cisco’s Unified Computing System (UCS) Manager is the gateway to the company’s pooled resource paradigm.

In all cases, the key takeaway is that the management plane is not just a piece of software sitting on top of your hardware. It is figuratively, and at times literally, embedded in the enclosure. OME-M not only requires Dell’s PowerEdge MX9002m management module but also manages only servers housed in the MX7000 chassis. HPE OneView’s “integration with a broad partner ecosystem” is a loaded statement, to say the least, because the software works only with HPE hardware. To enable resource pooling, Cisco’s UCS Manager takes control of server identity itself: MAC addresses, WWNs, and UUIDs. It is possible to revert a UCS system to a traditional 1:1 server mapping, but the process is neither straightforward nor quick.

For high-performance, stable workloads, these inseparable management tools can be highly efficient and convenient. In the case of Cisco UCS and similar platforms designed for composable infrastructure, they go beyond management to become key enablers of the architecture itself. Unfortunately, this integration does not extend to pricing.

These management tools are often segmented into tiers, where even essential features such as IP-KVM are locked behind license fees, separate from the tens of thousands of dollars already spent on hardware. HPE goes a step further with its ProLiant servers, locking firmware updates behind support contracts. This practice appears to have been in place for at least a decade now, despite a disgruntled customer’s compelling argument against the additional expense: if there is a bug in the firmware, why should customers have to pay for the vendor’s mistake?

Although major server vendors have adopted the DMTF Redfish standard for management, they also add proprietary extensions that enable many of the platform-specific capabilities described above. Redfish lowers the floor of lock-in, but it cannot stop vendors from raising the ceiling.
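To make the floor-versus-ceiling point concrete: the Redfish specification reserves an `Oem` property, keyed by vendor name, for vendor-specific data. A client written against the standard schema can read properties such as `PowerState` and `Status` from any conformant management controller, but whatever sits under `Oem` is defined by a single vendor. The minimal Python sketch below separates the two in a JSON payload. The sample payload, the `VendorA` key and its fields, and the function names `strip_oem` and `find_oem` are all hypothetical; no HTTP client or real hardware is involved.

```python
from typing import Any


def strip_oem(node: Any) -> Any:
    """Return a copy of a Redfish JSON payload with every "Oem" property removed.

    What remains is the schema-defined part that any conformant client can rely on.
    """
    if isinstance(node, dict):
        return {key: strip_oem(value) for key, value in node.items() if key != "Oem"}
    if isinstance(node, list):
        return [strip_oem(item) for item in node]
    return node


def find_oem(node: Any, path: str = "") -> dict[str, Any]:
    """Map the JSON path of each "Oem" property to its vendor-defined contents."""
    found: dict[str, Any] = {}
    if isinstance(node, dict):
        for key, value in node.items():
            child_path = f"{path}/{key}"
            if key == "Oem":
                found[child_path] = value
            else:
                found.update(find_oem(value, child_path))
    elif isinstance(node, list):
        for index, item in enumerate(node):
            found.update(find_oem(item, f"{path}/{index}"))
    return found


# Hypothetical, trimmed ComputerSystem payload; "VendorA" and its fields are invented.
SAMPLE_SYSTEM: dict[str, Any] = {
    "Id": "1",
    "PowerState": "On",
    "Status": {
        "State": "Enabled",
        "Health": "OK",
        "Oem": {"VendorA": {"FanProfile": "HighCapacity"}},
    },
    "Oem": {"VendorA": {"EnclosureSlot": 4, "LicenseTier": "Advanced"}},
}

if __name__ == "__main__":
    print("Portable:", strip_oem(SAMPLE_SYSTEM))
    for location, extension in find_oem(SAMPLE_SYSTEM).items():
        print("Vendor-specific at", location, "->", extension)
```

Tooling that only needs the stripped view can, in principle, work with any vendor’s controller; every feature that depends on an `Oem` entry ties that tooling to one vendor.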

OpenSFF discourages prescriptive management approaches. A Management Module is required to manage an OpenSFF system, but we will provide default management software to vendors, along with specifications for compatible implementations. A vendor can still follow current practices and charge additional fees to unlock the full capabilities of its Management Module. But customers will have the option to swap that vendor’s module for another vendor’s offering. They may lose some features, but they may also gain access to ones that were previously paywalled. Our intent is to keep users in control of their hardware, not the other way around.

Upgrade path lock-in

Vendors market their modular server platforms as long-term infrastructure investments. To some extent, their track records support this claim.

Enclosures such as the PowerEdge MX7000, HPE Synergy 12000 Frame, and Cisco UCS 5108 have supported or still support several generations of server blades. The Cisco chassis in particular supported UCS servers from 2009 to 2025, a 16-year run that also comes with maintenance releases until the end of 2028.

But the hardware and software integration that made this multi-generational support possible is also the limiting factor. Newer blade generations often come with compatibility caveats: additional hardware, new management tools, and downtime. For instance, HPE’s long-running Synergy 12000 Frame enclosure is compatible with the Synergy Gen11 compute modules, but only if the enclosure’s fan slots are fully populated with its model-specific High Capacity Fans. Upgrading to Synergy Gen12 modules is a more intensive process that involves relocating storage controllers, Fibre Channel HBAs, and switch modules.

Physical and mechanical lock-in clearly limits existing users’ future options, and management plane lock-in compounds these upgrade constraints. Vendors are increasingly moving access to management tools from one-time license fees to subscriptions, so the cost of staying on a platform keeps recurring.

OpenSFF aims to provide users with truly modular and flexible upgrade paths by decoupling the life cycles of Compute Nodes, Management Modules, and Enclosures from each other. Our standard will eventually result in incompatibilities across hardware versions as technology and needs evolve, but it does not fundamentally lock one component to another’s generation.

More importantly, our vendor-agnostic approach will allow users to mix and match components, which should result in more varied and competitively priced options. This flexibility should also extend to server management, because the Management Module is also vendor-agnostic and user-replaceable.

Build with OpenSFF

Existing modular server platforms offer convenience, density, and integration in exchange for costly commitments within a constrained ecosystem. This has historically made sense for large organizations that can afford dedicated IT teams and have well-defined workloads.

The equation falls apart at the edges: organizations that are large enough to benefit from blade servers but cannot fully justify the heavy capital and operational expenses that come with them; businesses that are still small but growing rapidly, and whose ceilings therefore keep moving; and establishments with diverse deployments that need equally varied hardware.

For companies already locked into one vendor’s platform, the friction also shows up when it is time to upgrade. It is rarely as simple as buying and swapping in one component, and the value of existing hardware and agreements is often determined by vendor decisions rather than by their functionality or the organization’s needs.

How independent should your computing performance be? To what extent should your management platform dictate your hardware and expenses? What does it actually mean for you to upgrade your IT infrastructure? Your answers to these questions will help you choose a suitable commitment. Our answers to these questions shaped our standard.

We encourage you to read our specifications, and we would be grateful for your help in spreading the word about OpenSFF. For technical clarifications, partnerships, and other inquiries, reach out to our development team at [email protected].
