Command & Cluster: Operating Systems for OpenSFF

Introduction

In our previous article, we explored the capabilities of one- to three-node OpenSFF systems equipped with compatible modern x86 hardware. We hope that discussion gave you a good idea of our standard’s compatibility with operating systems, platforms, and environments. In short, an OpenSFF system is no different from any PC or server in that respect.

Only the smart Management Modules are required to run Raspberry Pi OS. Everything running on a Compute Node is up to the user. The more useful question is therefore which OS or platform best fits your organization’s workload, skills, experience, or deployment model. With that in mind, we came up with a list of hypervisors, managed edge hyperconverged infrastructure (HCI) platforms, and container-native OS layers that we believe fit our intended use cases for OpenSFF systems.

Hypervisors

For general-purpose server consolidation, mixed OS environments, or legacy applications, Type-1 hypervisors are the natural fit.

Proxmox Virtual Environment (VE)

This popular open-source hypervisor is built on Debian Linux, combining Linux Kernel-based Virtual Machine (KVM) for full virtualization and Linux containers (LXC) for native container support. You can manage both VMs and containers via its web interface or REST API.
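As a rough illustration of the REST API mentioned above, the sketch below builds (but does not send) an authenticated request using only the Python standard library. It assumes the default API port 8006, the `/api2/json` base path, and API-token authentication. The host name, token ID, and secret are placeholders, not values from this article.

```python
import urllib.request

API_PORT = 8006          # default Proxmox VE web/API port
API_BASE = "/api2/json"  # base path of the JSON API


def build_request(host, token_id, token_secret, path="/version"):
    """Build a GET request for a Proxmox VE API path.

    token_id has the form "user@realm!tokenname"; all values passed in
    here are placeholders for illustration.
    """
    url = f"https://{host}:{API_PORT}{API_BASE}{path}"
    req = urllib.request.Request(url, method="GET")
    # API tokens are sent in the Authorization header, not as a cookie.
    req.add_header("Authorization", f"PVEAPIToken={token_id}={token_secret}")
    return req
```

Passing the result to `urllib.request.urlopen` would send it. A fresh installation uses a self-signed certificate, so the client must be configured to trust it before the call succeeds.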

Proxmox VE has modest requirements: a 64-bit CPU with hardware virtualization, at least 2GB RAM, and any form of storage. It uses Corosync to create and manage clusters. Ceph allows for hyperconverged NVMe storage on clusters that have at least three nodes and are connected via at least 10GbE.
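To check a candidate Compute Node against these minimums before installing, a small script can inspect what Linux reports. The sketch below is one possible approach, assuming an x86 machine booted into any Linux live environment. It parses the text of `/proc/cpuinfo` and `/proc/meminfo`; the function names and the slack in the memory threshold are our own choices.

```python
def has_hw_virtualization(cpuinfo_text):
    """Return True if any CPU lists the Intel (vmx) or AMD (svm) flag."""
    for line in cpuinfo_text.splitlines():
        if line.startswith("flags"):
            flags = line.split(":", 1)[1].split()
            if "vmx" in flags or "svm" in flags:
                return True
    return False


def mem_total_gib(meminfo_text):
    """Return the MemTotal value from /proc/meminfo, converted to GiB."""
    for line in meminfo_text.splitlines():
        if line.startswith("MemTotal:"):
            kib = int(line.split()[1])  # the kernel reports this in kB (KiB)
            return kib / (1024 * 1024)
    raise ValueError("MemTotal not found")


def meets_minimum(cpuinfo_text, meminfo_text, min_gib=1.8):
    """Check both requirements.

    min_gib defaults to slightly under 2 because MemTotal excludes memory
    reserved by firmware and the kernel (an assumption about typical slack).
    """
    return (has_hw_virtualization(cpuinfo_text)
            and mem_total_gib(meminfo_text) >= min_gib)
```

On a real machine, pass in the contents of the two files, for example `meets_minimum(open("/proc/cpuinfo").read(), open("/proc/meminfo").read())`. A missing flag can also mean that virtualization is disabled in the firmware settings rather than unsupported by the CPU.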

Licensing is Proxmox VE’s strongest differentiator. You can use the complete platform, including high availability, clustering, live migration, and Ceph integration, at no cost. Subscriptions are charged per CPU socket and provide access to an enterprise repository and premium support channels.

With its well-known and customizable Debian base, as well as its active forum and third-party communities, it is no wonder that many homelab enthusiasts and small business owners gravitate towards Proxmox VE.

XCP-ng

This open-source fork of Citrix XenServer is our recommendation for those looking for a more enterprise-grade architecture. XCP-ng is entirely managed by XAPI, which treats a pool, rather than an individual host, as its primary unit.

This hypervisor is therefore best suited for users who will manage multiple groups of OpenSFF systems. Within each pool, one host acts as a pool coordinator that keeps track of the entire pool’s state. This allows for more robust live migration between pools with large numbers of nodes, high availability configurations that are set at the pool level instead of per node, and pool-wide storage accounting that allows for incremental backups. However, XCP-ng has no native support for containerization, requiring users to run containers inside VMs.

Despite its enterprise-class design, XCP-ng’s hardware requirements are similar to those of Proxmox VE. Its developers are also working with server vendor Ampere to add support for ARM SoCs.

Like Proxmox VE, XCP-ng is completely free to use, and its subscription plans mainly provide priority access to formal support channels. Consistent with the platform’s intended users, the subscription plans are more cost-efficient for medium to large infrastructures.

Windows Server 2025 with Hyper-V

If your organization depends on Windows applications or servers, Microsoft’s hypervisor is your best option. Unfortunately, this integration has a steep price tag. Not only are the plans charged per CPU core instead of per socket, but the base plan also allows for only two virtual machines. Customers can create unlimited virtual machines only if they sign up for the more expensive Datacenter Edition license, which costs several thousand dollars for a 16-core system.
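The per-core arithmetic can be sketched as follows. The minimums used here (8 core licenses per processor, 16 per server) and the rule that each full set of base-edition licenses covers two VMs reflect our understanding of Microsoft’s published licensing model, not figures from this article, so verify them against the current licensing guide. The sketch deliberately counts licenses rather than dollars, since prices vary by channel and agreement.

```python
import math

# Assumed minimums; check Microsoft's current licensing terms.
MIN_CORES_PER_CPU = 8
MIN_CORES_PER_SERVER = 16
VMS_PER_STANDARD_SET = 2


def core_licenses(sockets, cores_per_socket):
    """Core licenses needed to license one server once."""
    per_cpu = max(cores_per_socket, MIN_CORES_PER_CPU)
    return max(sockets * per_cpu, MIN_CORES_PER_SERVER)


def standard_core_licenses(sockets, cores_per_socket, vm_count):
    """Core licenses needed on the base edition to run vm_count VMs.

    Every additional pair of VMs requires licensing all cores again.
    """
    sets = max(1, math.ceil(vm_count / VMS_PER_STANDARD_SET))
    return core_licenses(sockets, cores_per_socket) * sets
```

Under these assumptions, a single-socket 16-core node running five VMs on the base edition needs `standard_core_licenses(1, 16, 5)`, or 48 core licenses. That is three times the 16 a Datacenter license would count, which is why the break-even point depends on VM density.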

HCI platforms

For users managing distributed systems such as retail, industrial or office branches, unified software-defined platforms can significantly streamline operations.

Scale Computing

Scale Computing is a well-known HCI provider. Its HyperCore system is a proprietary platform based on Linux KVM. It uses a storage engine that presents data on the web interface as a single pool, while intelligently distributing data and performing backups across all VM disks. It also has a smart caching feature for clusters with mixed flash and mechanical storage.

HyperCore can be licensed on its own, but orchestration involves a separate license. Scale Computing also has an all-in-one bundle that includes bare metal hosting. With its advanced features, orchestration for up to 50,000 clusters, and convenient bundle, Scale Computing’s offerings are sensible for organizations with hundreds or even thousands of locations.

Tekkio

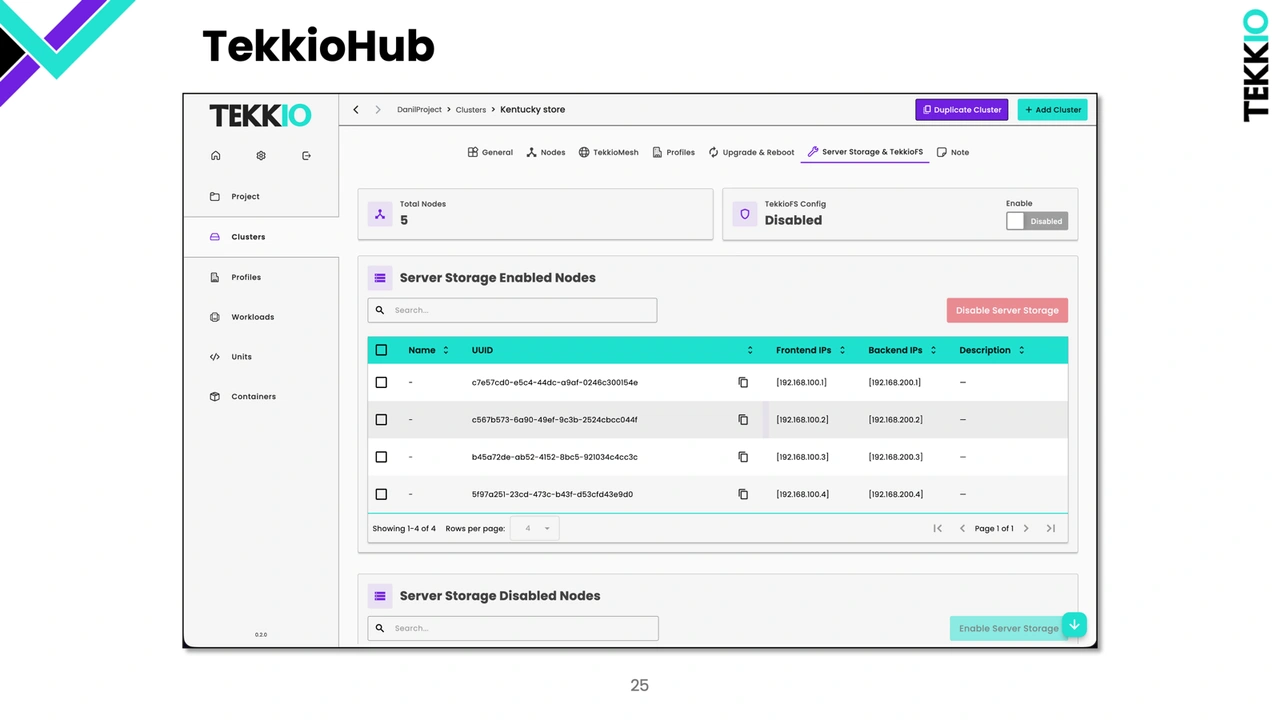

Tekkio is a newly established HCI edge platform that caters to smaller organizations. It has only one set of licensing tiers, all of which include TekkioHub, its management and reporting platform. It also has an affordable tier for US-based not-for-profit organizations.

Tekkio is designed to run even on modest hardware and suboptimal network and power conditions, a significant benefit for organizations that are starting out or have diverse hardware deployed. It also supports both VMs and Docker-compatible containers, making it a great option for hybrid or container-heavy workloads.

Container-native Linux environments

If your workloads are already containerized, you may want to avoid the hypervisor overhead by choosing Linux environments purpose-built for container orchestration. The following examples all have incredibly lightweight hardware requirements and support both x86-64 and ARM64 systems.

Talos Linux

This Kubernetes-focused environment has no SSH, interactive shell, or package manager. Talos Linux is purely API-driven and is configured via YAML. It is immutable: users cannot permanently alter its root filesystem, which trades customization for stability and security. For the same reason, updates replace the entire system image rather than individual packages. The previous version is kept in a separate partition, making it easy to revert if the latest version causes issues. Talos Linux is best for users who prioritize security over customization and run nothing but Kubernetes.
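The update-and-revert behavior described above can be pictured with a toy model. This is only a conceptual sketch of keeping the previous image alongside the active one, not Talos Linux’s actual implementation. The class name and version strings are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ImageSlots:
    """Two image slots: the one that boots, and the one kept for rollback."""
    active: str
    previous: Optional[str] = None

    def upgrade(self, new_version):
        # The whole image is replaced; the old one is retained, not patched.
        self.previous = self.active
        self.active = new_version

    def rollback(self):
        if self.previous is None:
            raise RuntimeError("no previous image to roll back to")
        # Swap slots so the reverted-from image stays available.
        self.active, self.previous = self.previous, self.active
```

Because an upgrade never modifies the running image in place, reverting is a matter of switching which slot boots rather than undoing individual package changes.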

Flatcar Container Linux

Flatcar is a community-maintained open-source fork of CoreOS, one of the first container-optimized Linux distributions. Unlike Talos, Flatcar provides SSH access and allows users to install their container manager of choice. Both Talos and Flatcar are immutable, though Flatcar’s filesystem can be remounted as read-write. If you need Docker compatibility or a more mature ecosystem, Flatcar is a great choice.

Red Hat MicroShift

Designed to run on Red Hat Enterprise Linux, Red Hat MicroShift is a Kubernetes environment meant for low-powered hardware operating in remote locations under suboptimal network conditions. It provides only the API and features directly related to deploying applications, such as security and runtime controls. This focused design also applies to its scalability, or lack thereof: it is only for single-node endpoints. The commercially supported build requires a subscription to Red Hat Device Edge. That said, there is a community build that can be freely used for evaluation and development.

Build with OpenSFF

These recommendations reflect our target use cases for compatible implementations of OpenSFF systems. Users will have a great degree of control and flexibility over their software stack, and our vendor-neutral standard is uniquely positioned to embrace future systems and platforms.

We invite you to read our specifications, and we would be grateful if you spread the word about OpenSFF. For technical clarifications, collaborations, and other inquiries, reach out to our development team at [email protected].